In this tutorial, we explore LitServe, a lightweight and powerful serving framework that allows us to deploy machine learning models as APIs with minimal effort. We build and test multiple endpoints that demonstrate real-world functionalities such as text generation, batching, streaming, multi-task processing, and caching, all running locally without relying on external APIs. By the end, we clearly understand how to design scalable and flexible ML serving pipelines that are both efficient and easy to extend for production-level applications. Check out the FULL CODES here.

```python
!pip install litserve torch transformers -q

import litserve as ls
import torch
from transformers import pipeline
import time
from typing import List
```

We begin by setting up our environment on Google Colab and installing all required dependencies, including LitServe, PyTorch, and Transformers. We then import the essential libraries and modules that will allow us to define, serve, and test our APIs efficiently.

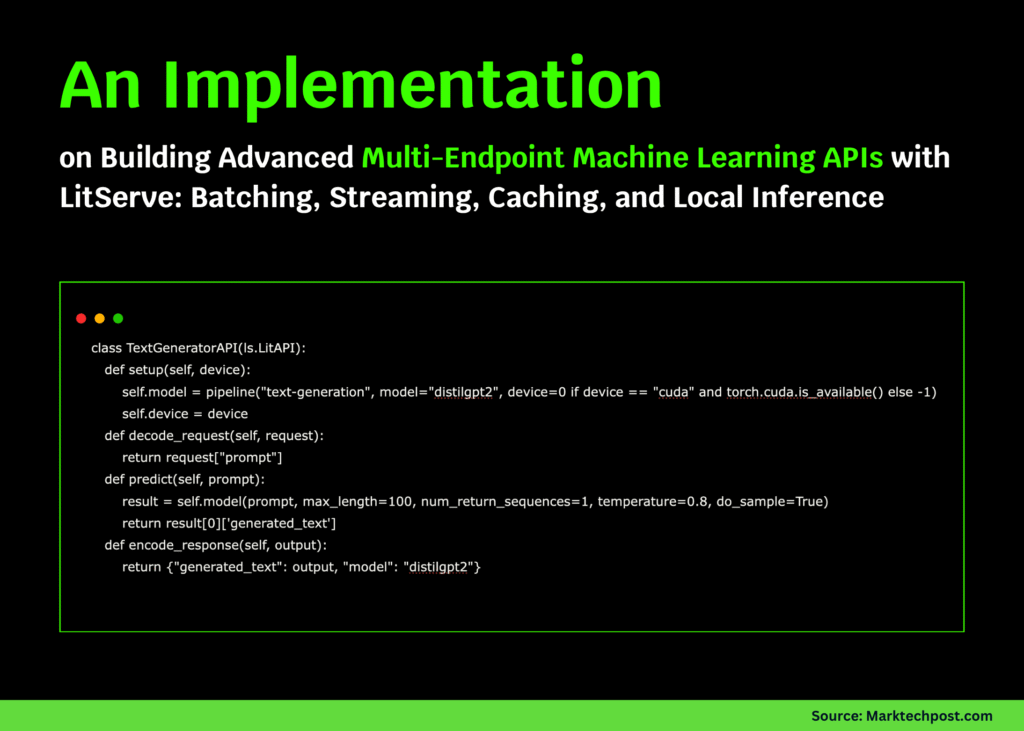

```python
class TextGeneratorAPI(ls.LitAPI):
    def setup(self, device):
        # Load DistilGPT2 once; use GPU 0 when CUDA is requested and available, otherwise CPU (-1).
        self.model = pipeline("text-generation", model="distilgpt2", device=0 if device == "cuda" and torch.cuda.is_available() else -1)
        self.device = device

    def decode_request(self, request):
        return request["prompt"]

    def predict(self, prompt):
        result = self.model(prompt, max_length=100, num_return_sequences=1, temperature=0.8, do_sample=True)
        return result[0]['generated_text']

    def encode_response(self, output):
        return {"generated_text": output, "model": "distilgpt2"}


class BatchedSentimentAPI(ls.LitAPI):
    def setup(self, device):
        self.model = pipeline("sentiment-analysis", model="distilbert-base-uncased-finetuned-sst-2-english", device=0 if device == "cuda" and torch.cuda.is_available() else -1)

    def decode_request(self, request):
        return request["text"]

    def batch(self, inputs: List[str]) -> List[str]:
        # The pipeline accepts a list of strings, so the batch is passed through unchanged.
        return inputs

    def predict(self, batch: List[str]):
        results = self.model(batch)
        return results

    def unbatch(self, output):
        # One result dict per input, in the same order.
        return output

    def encode_response(self, output):
        return {"label": output["label"], "score": float(output["score"]), "batched": True}
```

Here, we create two LitServe APIs, one for text generation using a local DistilGPT2 model and another for batched sentiment analysis. We define how each API decodes incoming requests, performs inference, and returns structured responses, demonstrating how easy it is to build scalable, reusable model-serving endpoints.
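To make the batching hooks concrete, here is a minimal sketch in plain Python with no LitServe or Transformers dependency. `FakeSentimentModel`, `ToyBatchedAPI`, and `run_batch` are hypothetical stand-ins, and the keyword rule inside the fake model is invented purely for illustration. The sketch only shows the order in which the hooks are driven: decode each request, batch, predict once, unbatch, then encode each output.

```python
from typing import Dict, List


class FakeSentimentModel:
    """Hypothetical stand-in for a Hugging Face pipeline (not a real model)."""

    def __call__(self, texts: List[str]) -> List[Dict[str, object]]:
        # Toy rule for illustration only: texts containing "love" are POSITIVE.
        return [
            {"label": "POSITIVE" if "love" in t.lower() else "NEGATIVE", "score": 0.9}
            for t in texts
        ]


class ToyBatchedAPI:
    """Uses the same hook names as BatchedSentimentAPI above."""

    def setup(self, device: str) -> None:
        self.model = FakeSentimentModel()

    def decode_request(self, request: Dict[str, str]) -> str:
        return request["text"]

    def batch(self, inputs: List[str]) -> List[str]:
        return inputs

    def predict(self, batch: List[str]) -> List[Dict[str, object]]:
        return self.model(batch)

    def unbatch(self, output: List[Dict[str, object]]) -> List[Dict[str, object]]:
        return output

    def encode_response(self, output: Dict[str, object]) -> Dict[str, object]:
        return {"label": output["label"], "score": float(output["score"]), "batched": True}


def run_batch(api: ToyBatchedAPI, requests: List[Dict[str, str]]) -> List[Dict[str, object]]:
    """Drive the hooks by hand: decode, batch, predict, unbatch, encode."""
    decoded = [api.decode_request(r) for r in requests]
    outputs = api.unbatch(api.predict(api.batch(decoded)))
    # One response per request, in the same order as the inputs.
    return [api.encode_response(o) for o in outputs]


api = ToyBatchedAPI()
api.setup("cpu")
responses = run_batch(api, [{"text": "I love Python!"}, {"text": "This is terrible."}])
```

The property worth checking in any real implementation is the same one the sketch relies on: `unbatch` must return exactly one output per decoded input, in input order, so each response can be matched to its request.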

```python
class StreamingTextAPI(ls.LitAPI):
    def setup(self, device):
        # The model is loaded here, but predict below only simulates streaming.
        self.model = pipeline("text-generation", model="distilgpt2", device=0 if device == "cuda" and torch.cuda.is_available() else -1)

    def decode_request(self, request):
        return request["prompt"]

    def predict(self, prompt):
        # Simulated output: a fixed word list, yielded one word at a time.
        words = ["Once", "upon", "a", "time", "in", "a", "digital", "world"]
        for word in words:
            time.sleep(0.1)
            yield word + " "

    def encode_response(self, output):
        for token in output:
            yield {"token": token}
```

In this section, we design a streaming text-generation API that emits tokens as they're generated. We simulate real-time streaming by yielding a fixed list of words one at a time rather than decoding from the model, demonstrating how LitServe can handle continuous token generation efficiently.
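The generator chaining behind this pattern can be sketched without any serving framework. The functions `stream_predict` and `stream_encode` below are hypothetical names, and echoing the prompt back word by word is an assumption made for illustration, not real decoding. What the sketch shows is that each token is wrapped and handed to the consumer as soon as it is produced, before the rest exist.

```python
import time
from typing import Dict, Iterator


def stream_predict(prompt: str, delay: float = 0.0) -> Iterator[str]:
    """Yield the prompt back one word at a time (a stand-in for real decoding)."""
    for word in prompt.split():
        time.sleep(delay)  # simulate per-token latency
        yield word + " "


def stream_encode(tokens: Iterator[str]) -> Iterator[Dict[str, str]]:
    """Wrap each token in a dict as soon as it arrives."""
    for token in tokens:
        yield {"token": token}


chunks = stream_encode(stream_predict("Once upon a time"))

# Pull a single chunk: only the first word has been generated at this point.
first = next(chunks)

# Drain the remaining chunks and rebuild the full text on the consumer side.
rest = [chunk["token"] for chunk in chunks]
text = (first["token"] + "".join(rest)).strip()
```

Because both stages are generators, nothing is buffered between them; a slow `delay` would be felt per token rather than once at the end.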

```python
class MultiTaskAPI(ls.LitAPI):
    def setup(self, device):
        # Both pipelines are pinned to CPU (device=-1) regardless of the device argument.
        self.sentiment = pipeline("sentiment-analysis", device=-1)
        self.summarizer = pipeline("summarization", model="sshleifer/distilbart-cnn-6-6", device=-1)
        self.device = device

    def decode_request(self, request):
        # Default to sentiment analysis when no task is given.
        return {"task": request.get("task", "sentiment"), "text": request["text"]}

    def predict(self, inputs):
        task = inputs["task"]
        text = inputs["text"]
        if task == "sentiment":
            result = self.sentiment(text)[0]
            return {"task": "sentiment", "result": result}
        elif task == "summarize":
            # Inputs under 30 words are returned as-is instead of being summarized.
            if len(text.split()) < 30:
                return {"task": "summarize", "result": {"summary_text": text}}
            result = self.summarizer(text, max_length=50, min_length=10)[0]
            return {"task": "summarize", "result": result}
        else:
            return {"task": "unknown", "error": "Unsupported task"}

    def encode_response(self, output):
        return output
```

We now develop a multi-task API that handles both sentiment analysis and summarization via a single endpoint. This snippet demonstrates how we can manage multiple model pipelines through a unified interface, dynamically routing each request to the appropriate pipeline based on the specified task.
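As the number of tasks grows, one alternative to an if/elif chain is a dispatch table. The sketch below is an illustrative variation, not the tutorial's implementation: `fake_sentiment`, `fake_summarize`, `HANDLERS`, and `route` are hypothetical names, and the two handlers are toy functions standing in for the real pipelines.

```python
from typing import Callable, Dict


def fake_sentiment(text: str) -> Dict[str, object]:
    # Hypothetical stand-in for the sentiment pipeline.
    return {"label": "POSITIVE" if "good" in text.lower() else "NEGATIVE"}


def fake_summarize(text: str) -> Dict[str, object]:
    # Hypothetical stand-in for the summarizer: keep the first five words.
    return {"summary_text": " ".join(text.split()[:5])}


# Map each task name to the function that handles it.
HANDLERS: Dict[str, Callable[[str], Dict[str, object]]] = {
    "sentiment": fake_sentiment,
    "summarize": fake_summarize,
}


def route(request: Dict[str, str]) -> Dict[str, object]:
    """Pick a handler by task name, defaulting to sentiment analysis."""
    task = request.get("task", "sentiment")
    handler = HANDLERS.get(task)
    if handler is None:
        return {"task": "unknown", "error": f"Unsupported task: {task!r}"}
    return {"task": task, "result": handler(request["text"])}


default_result = route({"text": "A good tutorial"})
summary_result = route({"task": "summarize", "text": "one two three four five six"})
unknown_result = route({"task": "translate", "text": "hello"})
```

Adding a task then means registering one more entry in `HANDLERS`, while the routing logic and the error path stay unchanged.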

```python
class CachedAPI(ls.LitAPI):
    def setup(self, device):
        self.model = pipeline("sentiment-analysis", device=-1)
        # In-memory cache keyed by the exact input text, plus hit/miss counters.
        self.cache = {}
        self.hits = 0
        self.misses = 0

    def decode_request(self, request):
        return request["text"]

    def predict(self, text):
        if text in self.cache:
            self.hits += 1
            return self.cache[text], True
        self.misses += 1
        result = self.model(text)[0]
        self.cache[text] = result
        return result, False

    def encode_response(self, output):
        result, from_cache = output
        return {"label": result["label"], "score": float(result["score"]), "from_cache": from_cache, "cache_stats": {"hits": self.hits, "misses": self.misses}}
```

We implement an API that uses caching to store previous inference results, reducing redundant computation for repeated requests. We track cache hits and misses in real time, illustrating how simple caching mechanisms can drastically improve performance in repeated inference scenarios.
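The cache above is a plain dictionary, so it grows with every distinct input. For a long-running server, one possible variation is to bound it with least-recently-used (LRU) eviction. The sketch below is an assumption-laden illustration rather than part of the tutorial's code: `BoundedCache`, `fake_model`, and `max_items` are hypothetical names, and the fake model simply records which inputs reached it.

```python
from collections import OrderedDict
from typing import Callable, Dict, List, Tuple


class BoundedCache:
    """LRU cache around a compute function, with hit/miss counters."""

    def __init__(self, compute: Callable[[str], Dict[str, object]], max_items: int = 2) -> None:
        self.compute = compute
        self.max_items = max_items
        self.store: "OrderedDict[str, Dict[str, object]]" = OrderedDict()
        self.hits = 0
        self.misses = 0

    def get(self, text: str) -> Tuple[Dict[str, object], bool]:
        if text in self.store:
            self.hits += 1
            self.store.move_to_end(text)  # mark as most recently used
            return self.store[text], True
        self.misses += 1
        result = self.compute(text)
        self.store[text] = result
        if len(self.store) > self.max_items:
            self.store.popitem(last=False)  # evict the least recently used entry
        return result, False


calls: List[str] = []


def fake_model(text: str) -> Dict[str, object]:
    # Hypothetical stand-in for the sentiment pipeline; records each real call.
    calls.append(text)
    return {"label": "POSITIVE", "score": 0.99}


cache = BoundedCache(fake_model, max_items=2)
cache.get("a")                 # miss: computed and stored
_, was_cached = cache.get("a")  # hit: served from the cache
cache.get("b")                 # miss
cache.get("c")                 # miss, and "a" is evicted to stay within max_items
```

With `max_items=2`, the fourth call pushes out `"a"`, so a later request for `"a"` would reach the model again; choosing the bound is a trade-off between memory and hit rate.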

```python
def test_apis_locally():
    print("=" * 70)
    print("Testing APIs Locally (No Server)")
    print("=" * 70)

    # 1) Text generation: decode -> predict -> encode
    api1 = TextGeneratorAPI()
    api1.setup("cpu")
    decoded = api1.decode_request({"prompt": "Artificial intelligence will"})
    result = api1.predict(decoded)
    encoded = api1.encode_response(result)
    print(f"✓ Result: {encoded['generated_text'][:100]}...")

    # 2) Batched sentiment: decode each, batch, predict once, unbatch, encode each
    api2 = BatchedSentimentAPI()
    api2.setup("cpu")
    texts = ["I love Python!", "This is terrible.", "Neutral statement."]
    decoded_batch = [api2.decode_request({"text": t}) for t in texts]
    batched = api2.batch(decoded_batch)
    results = api2.predict(batched)
    unbatched = api2.unbatch(results)
    for i, r in enumerate(unbatched):
        encoded = api2.encode_response(r)
        print(f"✓ '{texts[i]}' -> {encoded['label']} ({encoded['score']:.2f})")

    # 3) Multi-task routing
    api3 = MultiTaskAPI()
    api3.setup("cpu")
    decoded = api3.decode_request({"task": "sentiment", "text": "Amazing tutorial!"})
    result = api3.predict(decoded)
    print(f"✓ Sentiment: {result['result']}")

    # 4) Caching: the first request misses, the next two hit
    api4 = CachedAPI()
    api4.setup("cpu")
    test_text = "LitServe is awesome!"
    for i in range(3):
        decoded = api4.decode_request({"text": test_text})
        result = api4.predict(decoded)
        encoded = api4.encode_response(result)
        print(f"✓ Request {i+1}: {encoded['label']} (cached: {encoded['from_cache']})")

    print("=" * 70)
    print("✅ All tests completed successfully!")
    print("=" * 70)


test_apis_locally()
```

We test all our APIs locally to verify their correctness and performance without starting an external server. We sequentially evaluate text generation, batched sentiment analysis, multi-tasking, and caching, ensuring each component of our LitServe setup runs smoothly and efficiently.
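The local test prints results but does not assert anything or measure latency. A small helper along the following lines could do both for any API that exposes the three basic hooks. This is a sketch under stated assumptions: `EchoAPI` is a hypothetical toy class used so the example runs without downloading models, and `run_once` is an illustrative helper, not a LitServe function.

```python
import time
from typing import Any, Dict, Iterable, Tuple


class EchoAPI:
    """Hypothetical API with the same three hooks as the classes above."""

    def setup(self, device: str) -> None:
        self.device = device

    def decode_request(self, request: Dict[str, Any]) -> str:
        return request["text"]

    def predict(self, text: str) -> str:
        return text.upper()

    def encode_response(self, output: str) -> Dict[str, Any]:
        return {"output": output, "device": self.device}


def run_once(api: Any, payload: Dict[str, Any], required_keys: Iterable[str]) -> Tuple[Dict[str, Any], float]:
    """Run decode -> predict -> encode once, check the response keys, and time it."""
    start = time.perf_counter()
    response = api.encode_response(api.predict(api.decode_request(payload)))
    elapsed = time.perf_counter() - start
    missing = [key for key in required_keys if key not in response]
    if missing:
        raise AssertionError(f"response is missing keys: {missing}")
    return response, elapsed


api = EchoAPI()
api.setup("cpu")
response, seconds = run_once(api, {"text": "hello"}, ["output", "device"])
```

The same helper could be pointed at `TextGeneratorAPI` or `CachedAPI` after their `setup` calls; timing the cached API on a repeated input is one way to observe the effect of a cache hit.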

In conclusion, we create and run diverse APIs that showcase the framework's versatility. We experiment with text generation, sentiment analysis, multi-tasking, and caching to experience LitServe's seamless integration with Hugging Face pipelines. As we complete the tutorial, we realize how LitServe simplifies model deployment workflows, enabling us to serve intelligent ML systems in just a few lines of Python code while maintaining flexibility, performance, and simplicity.


Asif Razzaq is the CEO of Marktechpost Media Inc. As a visionary entrepreneur and engineer, Asif is committed to harnessing the potential of Artificial Intelligence for social good. His most recent endeavor is the launch of an Artificial Intelligence Media Platform, Marktechpost, which stands out for its in-depth coverage of machine learning and deep learning news that is both technically sound and easily understandable by a wide audience. The platform boasts over 2 million monthly views, illustrating its popularity among audiences.
